Choose one:

a) This is reassuring, because our algorithmic overlords are still mostly quite stupid, or b) they are lulling us into a false sense of security until it is too late.

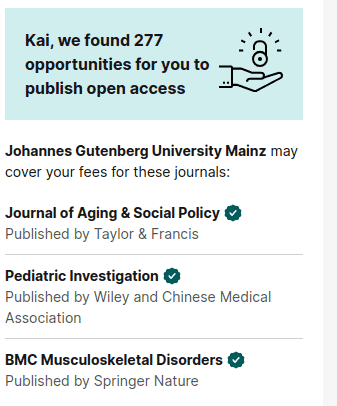

I lean towards b), because this was shown to me on RG, a site that makes it its actual business to know exactly what I write about and in which outlets I publish.