Everyone in Germany is very excited, because the AfD has had a run of good polling results while the coalition’s individual and collective ratings are abysmal. Some very recent surveys had the AfD at 18 per cent. Apart from the symbolism, that puts them ahead of the Greens and on par with the SPD.

Normally, I’m the first to tell everybody about this being but one (or two, or three) single poll(s), the concept of survey error, and the sheer arbitrariness of the comparison – after all, the AfD and the Greens are polar opposites that do not compete for the same voters. But today’s DeutschlandTrend (by Infratest dimap) put them at 18 per cent, too, and that made me think again: is this apparent rise real? And how could I find out?

Simon Jackman’s groundbreaking work on poll-pooling is the inspiration behind some pretty ambitious Bayesian models on the internet. I’ve toyed with these before, with very variable success, so I thought I might try something more pedestrian.

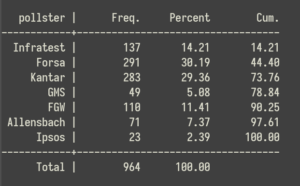

Thanks to dawum.de and their API, I could quickly download the headline findings for a cool 964 surveys that were carried out by seven reputable pollsters since September 24, 2017 (the Bundestag election before the last), filtering out many more by other companies that I deemed less reliable.

Forsa and Kantar each contribute about 30 per cent of the surveys. Infratest and FGW, which run very prominent survey series for Germany’s public broadcasters, collectively provide another quarter.

I expanded the data to create a file with more than 1.6 million records, one for each original respondent. While all individual information is lost, each record contains an identifier for the poll, a nominal collection date (the mid-point of the actual data collection period), and information on the polling company.
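For illustration, here is a minimal Python sketch of what such an expansion might look like. It is not the code behind this post: the field names (`poll_id`, `pollster`, `start`, `end`, `n`, `afd_share`) and the example poll are made up, and rounding the implied number of AfD supporters to the nearest integer is an assumption.

```python
from datetime import date


def midpoint(start: date, end: date) -> date:
    """Nominal collection date: the mid-point of the fieldwork period."""
    return start + (end - start) // 2


def expand_poll(poll: dict) -> list[dict]:
    """Turn one poll headline into one record per respondent.

    Each record carries the poll identifier, the pollster, the nominal
    date and a 0/1 indicator for AfD support (assumed field names).
    """
    n_afd = round(poll["n"] * poll["afd_share"])  # implied AfD respondents
    base = {
        "poll_id": poll["poll_id"],
        "pollster": poll["pollster"],
        "date": midpoint(poll["start"], poll["end"]),
    }
    return [{**base, "afd": 1 if i < n_afd else 0} for i in range(poll["n"])]


# Hypothetical poll, for illustration only
example_poll = {
    "poll_id": 1,
    "pollster": "Example Institute",
    "start": date(2023, 5, 30),
    "end": date(2023, 6, 1),
    "n": 1000,
    "afd_share": 0.18,
}
records = expand_poll(example_poll)
```

Stacking the output of `expand_poll` over all surveys would give a respondent-level file of the kind described above.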

Pooling models often include some autoregressive term and/or trend components to deal with time. I had a vague idea involving a three-level model with a fancy structure for the random terms, but that proved impractical and so I did something really pedestrian: I estimated an empty model with standard errors clustered on surveys and two sets of fixed effects: the first encodes the company, the second corresponds to 75 consecutive four-week periods, beginning with the day after the 2017 election.
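To make the period definition concrete, here is a small Python sketch of how a nominal survey date could be mapped to its four-week period. The function and constant names are mine, and numbering the periods from 1 is an assumption. The election date is the one given above.

```python
from datetime import date

ELECTION_DAY = date(2017, 9, 24)
FIRST_DAY = date(2017, 9, 25)  # the day after the 2017 election
PERIOD_LENGTH = 28             # four weeks, in days


def period_index(nominal_date: date) -> int:
    """Return the 1-based four-week period a nominal date falls into."""
    offset = (nominal_date - FIRST_DAY).days
    if offset < 0:
        raise ValueError("date lies before the first period")
    return offset // PERIOD_LENGTH + 1


first = period_index(date(2017, 9, 25))        # first day of period 1
last_of_first = period_index(date(2017, 10, 22))  # 27 days later, still period 1
second = period_index(date(2017, 10, 23))      # 28 days later, period 2
```

Each survey then gets exactly one period dummy, which is also why a mid-point close to a period boundary is a somewhat arbitrary assignment.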

The purpose of the first set is to control for house effects, i.e. the systematic differences between polling companies.

But why fixed effects for fixed periods of time? Because this allows for non-parametric estimates and hence a flexible pattern of AfD support over time.
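One way to see what "non-parametric" means here: if the pollster effects are left out (which is a simplification, not the model I estimated), a model with nothing but period dummies reproduces the respondent-weighted mean of AfD support within each period, with no functional form tying one period to the next. A minimal Python sketch with made-up numbers:

```python
from collections import defaultdict


def pooled_share_by_period(polls):
    """Respondent-weighted AfD share per period.

    `polls` is an iterable of (period, n_respondents, afd_share) tuples.
    House effects are deliberately ignored in this sketch.
    """
    afd = defaultdict(float)
    total = defaultdict(int)
    for period, n, share in polls:
        afd[period] += n * share  # implied number of AfD respondents
        total[period] += n
    return {p: afd[p] / total[p] for p in sorted(total)}


# Hypothetical polls: (period, sample size, AfD share)
toy_polls = [(1, 1000, 0.12), (1, 2000, 0.15), (2, 1500, 0.18)]
estimates = pooled_share_by_period(toy_polls)
```

Each period gets its own level, so the estimated trajectory can jump, stall, or reverse from one period to the next.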

Why four weeks? Because this is traditionally a long time in politics, and because a period of this length usually encompasses a relatively large number of surveys, allowing for reliable estimates of AfD support during that month. But of course, I could use a shorter or even a longer timeframe.

Speaking of arbitrariness, assigning every respondent to the survey’s mid-point is not ideal, and assigning the survey to a single month is possibly even worse if the mid-point is close to the beginning or end of the month. It would be better to have some moving average of the fixed effects, but I could not think of a good (or any) way to do this.

One minor finding is that the house effects are all significant and that one is particularly pronounced: Forsa, who historically were seen as close to the SPD, post consistently lower levels of AfD support than other companies.

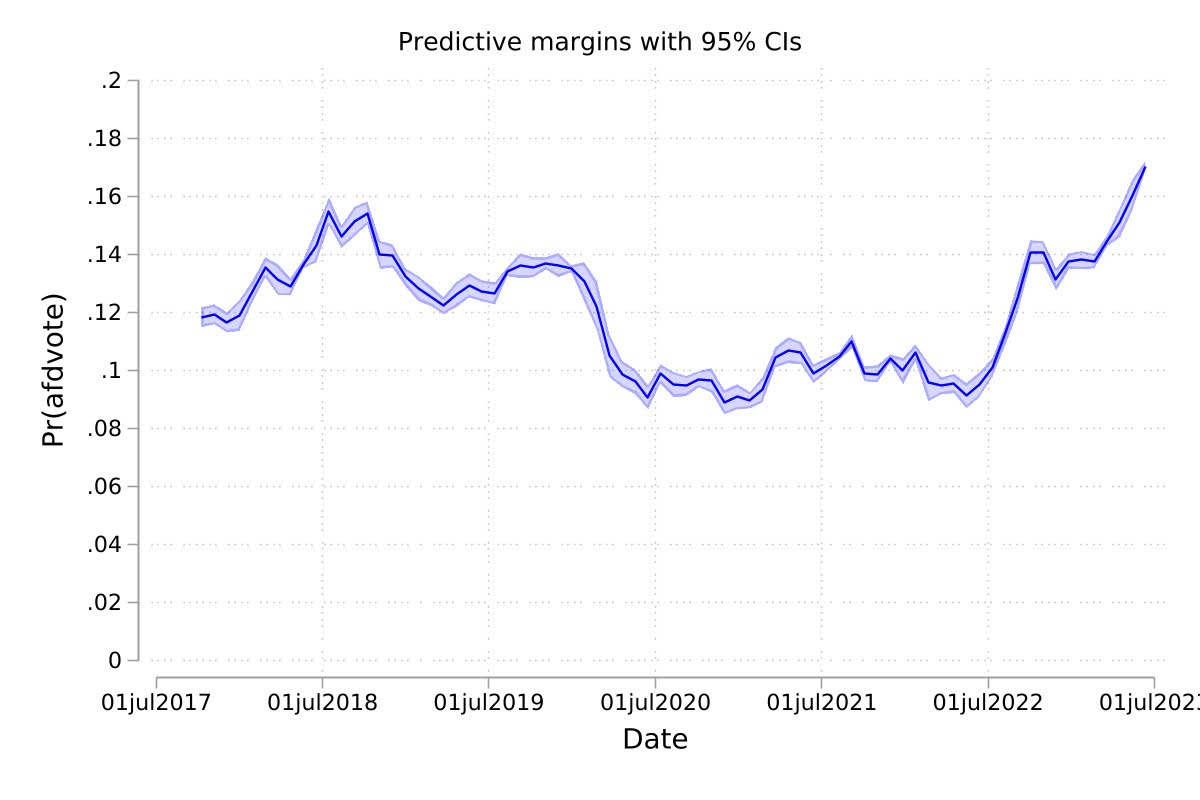

But of course, the main purpose of the whole exercise is to get per-month model-based estimates of AfD support. To this end, I use the quasi-magical margins command and then plot the monthly estimates. Here is the result:

If we can trust the model, the graph suggests that we have relatively precise estimates of AfD support for most months.

And if we choose to trust the estimates, it seems clear that the long period of stagnation that began with the pandemic finally came to an end in the autumn of 2022, when support jumped up by about four points. Over the last few months, it has gone up further, higher than ever before.

So yes, it seems that the AfD’s surge is real. We shall see how long it lasts.
